Improve Predictive Models

Model quality is a critical success factor in predictive AI solutions. AI Models (formerly Einstein Studio) supports continuous, iterative improvement of predictive models. Measure model quality in production over time. Use quality alerts to identify and address areas for improvement. Experiment with new model versions. Efforts to improve model quality can lead to better business outcomes.

Monitor for Model Drift

In production, models generally become less accurate over time, a phenomenon known as drift. Models drift when characteristics in the real-world data diverge significantly from the training data used to build them. Operational changes, trends, seasonal fluctuations, new or discontinued categories, and other factors can change the composition of your data.
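Drift can be quantified in several ways. As one illustration, the following Python sketch computes the Population Stability Index (PSI), a commonly used drift statistic, for a single numeric feature by comparing its training distribution with the values seen in production. This is a generic example, not a description of how AI Models measures drift. The function name `population_stability_index`, the equal-width binning, and the `eps` floor for empty bins are assumptions made for illustration.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Hypothetical helper: compare a feature's production values (actual)
    with its training values (expected) using equal-width bins derived
    from the training data. Returns 0.0 when the binned distributions match."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def proportions(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Production values outside the training range are clamped
            # into the first or last bin.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # The eps floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    # PSI = sum over bins of (actual% - expected%) * ln(actual% / expected%)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    training = [i / 100 for i in range(100)]            # spread over [0, 0.99]
    shifted = [0.5 + i / 200 for i in range(100)]       # concentrated in [0.5, 0.995]
    print(population_stability_index(training, training))  # 0.0
    print(population_stability_index(training, shifted))   # large positive value
```

Larger PSI values indicate a bigger shift between training and production data. A widely cited rule of thumb treats values above roughly 0.25 as a significant shift, but any threshold is a judgment call that depends on the feature and the use case.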

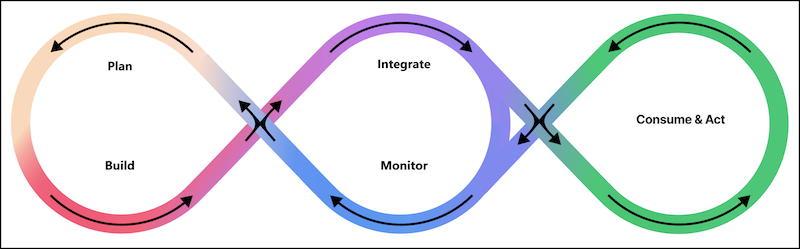

Predictive Model Lifecycle

To keep your models from drifting off course, implement the model lifecycle, a set of continuous, iterative operational tasks.

| Phase | Description |
|---|---|
| Plan | Design the first or the next version of the predictive model. Define or refine the model’s predictive target (output) and model features (inputs). |
| Build | Populate the training data in Data 360. In Model Builder, build a version of the predictive model. |
| Integrate | Test, integrate, and activate the predictive model in your solution. |
| Consume & Act | In production, request and consume the output (predictions, prescriptions, and top factors) of the predictive model. Use predictive intelligence to make better decisions and take actions that improve business outcomes. |
| Monitor | Monitor the quality of the predictive model’s output. Measure performance, assess model quality, review quality alerts, and identify improvement opportunities. |
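The Monitor phase boils down to comparing predictions with the outcomes that eventually occur and flagging periods where quality slips. The following Python sketch illustrates that idea with windowed accuracy and a fixed threshold. It is a generic illustration, not the quality-alert mechanism in AI Models; `QualityAlert`, `monitor_accuracy`, the binary labels, and the threshold value are all hypothetical, and the sketch assumes the records are already in time order with known outcomes.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class QualityAlert:
    """Hypothetical alert record: which window dropped and by how much."""
    window_index: int
    accuracy: float


def monitor_accuracy(
    predictions: Sequence[int],
    outcomes: Sequence[int],
    window: int,
    threshold: float,
) -> List[QualityAlert]:
    """Split time-ordered scored records into fixed-size windows and
    return an alert for each window whose accuracy is below threshold."""
    if len(predictions) != len(outcomes):
        raise ValueError("predictions and outcomes must be the same length")
    if window <= 0:
        raise ValueError("window must be positive")
    alerts: List[QualityAlert] = []
    for start in range(0, len(predictions), window):
        preds = predictions[start:start + window]
        actual = outcomes[start:start + window]
        correct = sum(p == y for p, y in zip(preds, actual))
        accuracy = correct / len(preds)
        if accuracy < threshold:
            alerts.append(QualityAlert(start // window, accuracy))
    return alerts


if __name__ == "__main__":
    preds = [1, 0, 1, 1, 1, 1, 1, 1]
    actual = [1, 0, 1, 1, 0, 0, 1, 0]
    # The second window (index 1) gets only 1 of 4 right, so it is flagged.
    print(monitor_accuracy(preds, actual, window=4, threshold=0.8))
```

A fixed window keeps the example simple; in practice the window size and threshold are exactly the kinds of settings you refine as you learn how your model behaves in production.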

Iterative Refinement and Continuous Improvement

Model implementation is an iterative process rather than a linear one. Model Builder is designed for rapid exploration, experimentation, and implementation. You learn as you go. Every step of the way, you use built-in feedback to check your results, review your assumptions, ask new questions, make adjustments, and try again. Add a column to your training data. Change model settings. Resolve alerts, refine thresholds, and filter data. As you fine-tune your approach, each improvement can lead to better operational outcomes.