Using Retrieval Augmented Generation

Retrieval Augmented Generation (RAG) in Data 360 is a framework for grounding large language model (LLM) prompts. By augmenting the prompt with accurate, current, and pertinent information, RAG improves the relevance and value of LLM responses for users.

When you submit an LLM prompt, RAG in Data 360:

- Retrieves relevant information from structured and unstructured data indexed in Data 360’s vector database

- Augments the prompt by combining this information with the original prompt

- Generates a prompt response

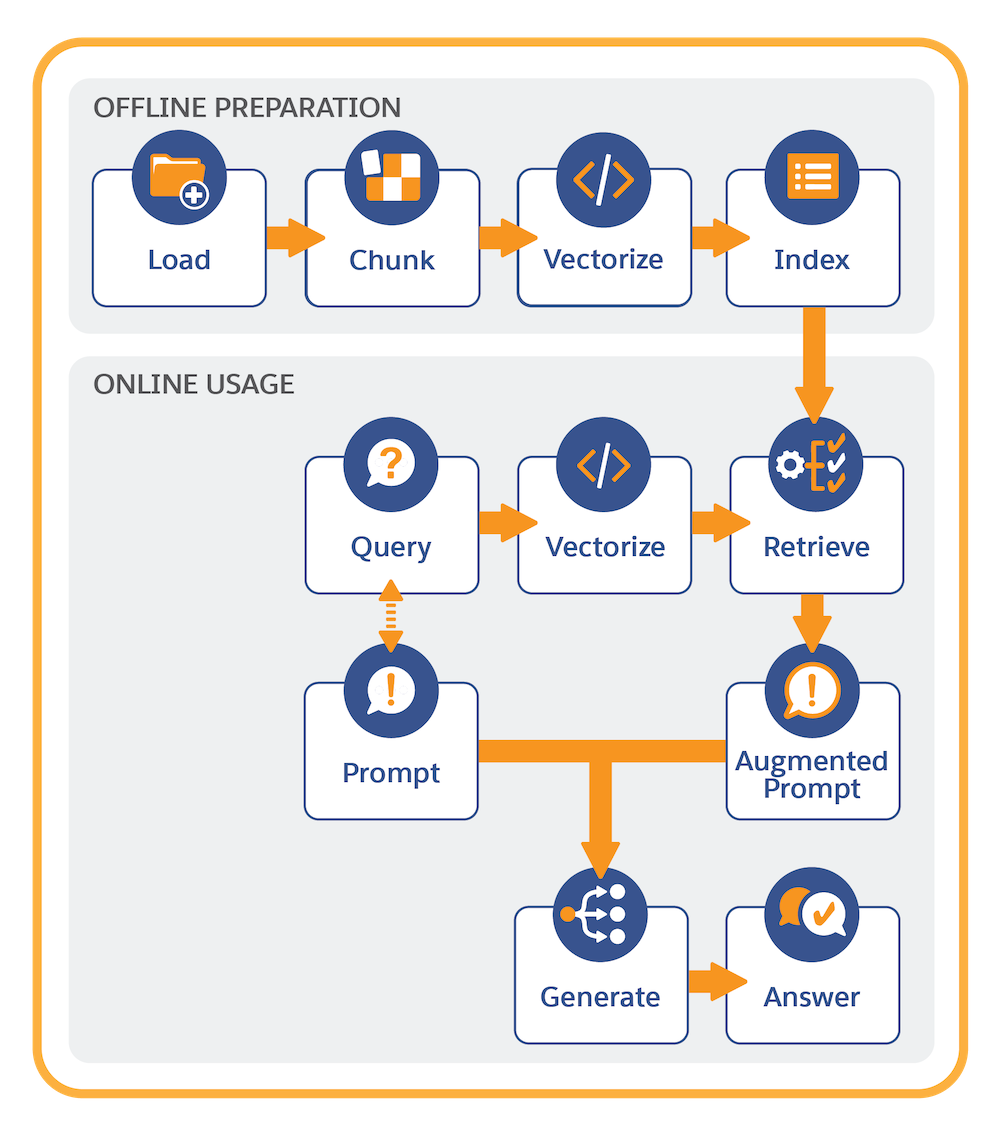

It’s helpful to think of RAG in two main parts: offline preparation and online usage.

Offline Preparation

To implement RAG, start by connecting the structured and unstructured data that RAG uses to ground LLM prompts.

Data 360 uses a vector database to manage unstructured data. The vector database holds a search index: a collection of structured and unstructured content that is organized for efficient search. Content can be ingested from a variety of sources and file types. Examples of unstructured content used with RAG include service replies, cases, RFP responses, knowledge articles, emails, and meeting notes.

Offline preparation involves these steps:

- Connect your unstructured data.

- Create a search index configuration to chunk and vectorize the content.

Chunking breaks the text into smaller, semantically meaningful units, and vectorization converts chunks into numeric representations of the text that capture semantic similarities.

- Store and manage the search index in Data 360.
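
The chunking and vectorization steps above can be illustrated with a minimal sketch. It is not the Data 360 implementation: the function names (`chunk_text`, `vectorize`, `build_index`), the sentence-based chunking rule, and the hashed word-count vector are all assumptions chosen to keep the example self-contained. A real search index configuration would use a trained embedding model instead of the toy vectorizer shown here.

```python
import hashlib
import math
import re


def chunk_text(text, max_words=40):
    """Group whole sentences into chunks, starting a new chunk when
    adding the next sentence would exceed max_words."""
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks, current = [], []
    for sentence in sentences:
        words = sentence.split()
        if current and len(current) + len(words) > max_words:
            chunks.append(" ".join(current))
            current = []
        current.extend(words)
    if current:
        chunks.append(" ".join(current))
    return chunks


def vectorize(text, dims=64):
    """Toy stand-in for an embedding model (assumption): hash each token
    into one of `dims` buckets, then L2-normalize the counts."""
    vec = [0.0] * dims
    for token in re.findall(r"[a-z0-9]+", text.lower()):
        bucket = int(hashlib.md5(token.encode()).hexdigest(), 16) % dims
        vec[bucket] += 1.0
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm else vec


def build_index(documents):
    """Chunk and vectorize each document; return a list of index entries."""
    index = []
    for doc_id, text in documents.items():
        for chunk_no, chunk in enumerate(chunk_text(text)):
            index.append({
                "doc_id": doc_id,
                "chunk_no": chunk_no,
                "text": chunk,
                "vector": vectorize(chunk),
            })
    return index


# Hypothetical sample content, for illustration only.
sample_index = build_index({
    "kb1": "Reset your password from the login page. Contact support if it fails.",
})
print(len(sample_index), "chunk(s) indexed")
```

Keeping the chunk text next to its vector is what later lets a retriever return readable passages rather than raw numbers.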

Retrievers serve as the bridge between search indexes and prompt templates. Retrievers further refine the search criteria and retrieve the most relevant information used to augment prompts. To support a variety of use cases, you create custom retrievers in AI Models (formerly Einstein Studio). To learn more, see Retrieve Data.
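
To make the idea of a retriever refining search criteria concrete, here is a hedged sketch. The `Chunk` class, the `retrieve` function, and its `source`, `top_k`, and `min_score` parameters are hypothetical and do not correspond to the retriever settings or APIs in AI Models. The three-dimensional vectors are hand-written placeholders, not real embeddings.

```python
import math
from dataclasses import dataclass


@dataclass
class Chunk:
    text: str
    source: str    # hypothetical metadata field, e.g. "knowledge" or "case"
    vector: list   # placeholder embedding


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(index, query_vector, top_k=2, source=None, min_score=0.0):
    """Filter by source, score by similarity, drop weak matches,
    and return the top_k (score, chunk) pairs, best first."""
    candidates = [c for c in index if source is None or c.source == source]
    scored = [(cosine(query_vector, c.vector), c) for c in candidates]
    scored = [pair for pair in scored if pair[0] >= min_score]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]


# Hypothetical index entries, for illustration only.
index = [
    Chunk("Reset a password from the login page.", "knowledge", [1.0, 0.0, 0.0]),
    Chunk("Case 001: customer could not log in.", "case", [0.8, 0.6, 0.0]),
    Chunk("Quarterly RFP pricing summary.", "rfp", [0.0, 0.0, 1.0]),
]
query = [1.0, 0.0, 0.0]

for score, chunk in retrieve(index, query, top_k=2):
    print(round(score, 2), chunk.source, chunk.text)
```

Different use cases would call `retrieve` with different filters and limits over the same index, which is the role the text assigns to custom retrievers.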

Online Usage

The final piece of the RAG implementation is to call a retriever in a prompt template. For a given prompt template, the prompt designer can customize retriever query and result settings to populate a prompt with the most relevant information.

Online usage involves these steps:

- The retriever is invoked with a dynamic query initiated from the prompt template.

- The query is vectorized (converted to numeric representations). Vectorization enables search to find semantic matches in the search index.

- The query retrieves the relevant context from Data 360’s vector database.

- The original prompt is populated with the information retrieved from the search index.

- The prompt is submitted to the LLM, which generates and returns the prompt response.
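
The online steps above can be sketched end to end as follows. This is an illustration under stated assumptions, not how Data 360 or prompt templates are implemented: `vectorize` uses plain word counts in place of an embedding model, `PROMPT_TEMPLATE` is a made-up template, and `llm` is any callable that accepts a prompt string (a stub is used here so the example runs without a model).

```python
import math
import re
from collections import Counter
from string import Template


def vectorize(text):
    """Toy stand-in for an embedding model (assumption): lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


# Hypothetical prompt template with two merge fields.
PROMPT_TEMPLATE = Template(
    "Answer using only the context below.\n\n"
    "Context:\n$context\n\n"
    "Question: $question"
)


def answer(question, index, llm, top_k=1):
    query_vector = vectorize(question)                      # vectorize the query
    ranked = sorted(index,                                  # retrieve relevant context
                    key=lambda c: cosine(query_vector, c["vector"]),
                    reverse=True)
    context = "\n".join(f"- {c['text']}" for c in ranked[:top_k])
    prompt = PROMPT_TEMPLATE.substitute(                    # populate the prompt
        context=context, question=question)
    return llm(prompt)                                      # submit to the LLM


# Hypothetical indexed content, for illustration only.
texts = [
    "Reset your password from the login page.",
    "Invoices are emailed on the first day of each month.",
]
index = [{"text": t, "vector": vectorize(t)} for t in texts]


def echo_llm(prompt):
    """Stub LLM that returns the prompt it received."""
    return prompt


print(answer("How do I reset my password?", index, llm=echo_llm))
```

Because the stub echoes its input, the printed text is the augmented prompt that a real model would receive.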

Many LLMs are trained on broad internet content that can be out of date. With RAG, prompt template users can give the LLM accurate, up-to-date information and bring proprietary data to the LLM without retraining or fine-tuning the model. The generated responses are then more pertinent to their context and use case.